We are a team of three guys (so far) with a design idea for a simple yet challenging racing/strategy game based on real-world geospatial data coupled with a 3D gaming engine. Tonight we started building it together in Unity after downloading a bunch of public geospatial datasets. Here’s a summary of what we’ve done and some basic screenshots. The goal is to make progress every couple of days to show what is possible with a little work and a combination of creative, technical and group management skillsets.

First Images

Starting with Geography

The landscape of our 3D game is built using real world geographic information – aka geospatial data. We wanted this so that we could build games around real locales and market the game to local users. One side benefit is the players will learn a little about Canadian geography!

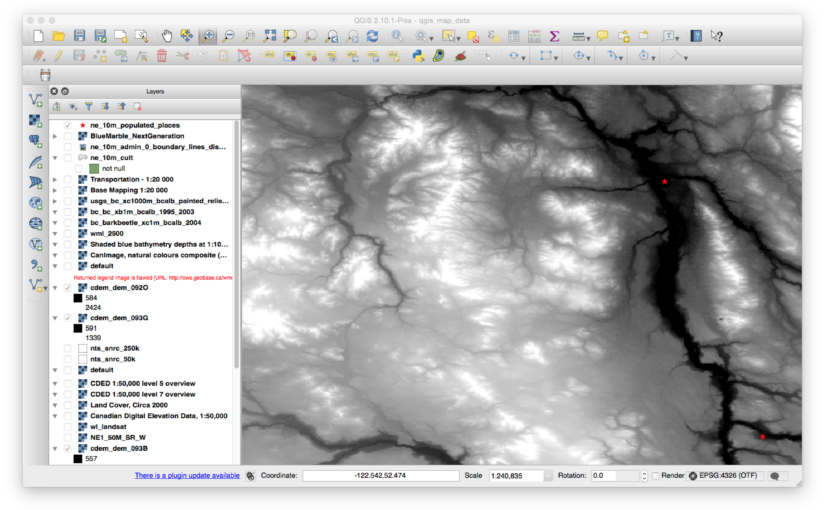

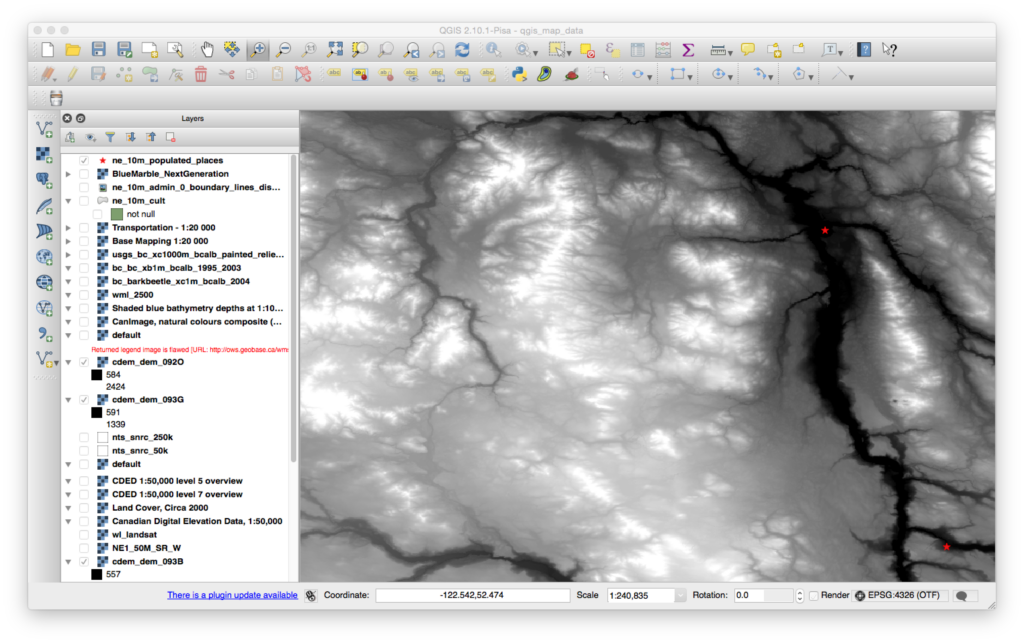

We’ve got a great collection of geo data for all of Canada available through the government Geogratis website. Once we’ve decided which map tile numbers we need, we grab the CDED data for those tiles, which is a TIFF elevation image file with geographic coordinates included.

We also found a BC provincial shaded relief map layer that was pretty nice as a starter. As both these data files are geolocated we can load the data into GIS (geographic information system) software from QGIS.org and combine it with any other data we have for the region. In our case we have a “populated places” point file from naturalearthdata.org that we show as stars on top of the shaded relief map.

Then we export the elevation data and the relief data (including the stars) into two files for Unity to ingest. The result is two PNGs: the relief image is going to be our texture and the elevation image will be the heightmap for the terrain.
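For anyone curious what “turning elevation into a heightmap” boils down to, here is a minimal Python sketch. It is not the export we did in the GIS, just an illustration: it assumes you already have the elevations as a grid of numbers in metres, stretches them across the 16-bit range and packs them as raw bytes. The function name and the sample values are made up for the example, and the row order or tile size may need adjusting for whatever importer you use.

```python
import struct

def elevations_to_raw16(grid, path=None):
    """Scale a 2D grid of elevations (metres) to 0..65535 and pack as little-endian uint16."""
    flat = [v for row in grid for v in row]
    lo, hi = min(flat), max(flat)
    span = (hi - lo) or 1.0  # avoid dividing by zero on a perfectly flat tile
    scaled = [round((v - lo) / span * 65535) for v in flat]
    data = struct.pack("<%dH" % len(scaled), *scaled)
    if path is not None:
        # Optional: write the bytes out as a headerless RAW file
        with open(path, "wb") as f:
            f.write(data)
    return data

# Hypothetical 2x2 tile, elevations in metres
sample = [[0.0, 500.0], [1000.0, 2000.0]]
raw = elevations_to_raw16(sample)
```

Note that the lowest point in the tile ends up at 0 and the highest at 65535, so the real-world elevation range has to be remembered separately if you want true scale later.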

Loading into Unity

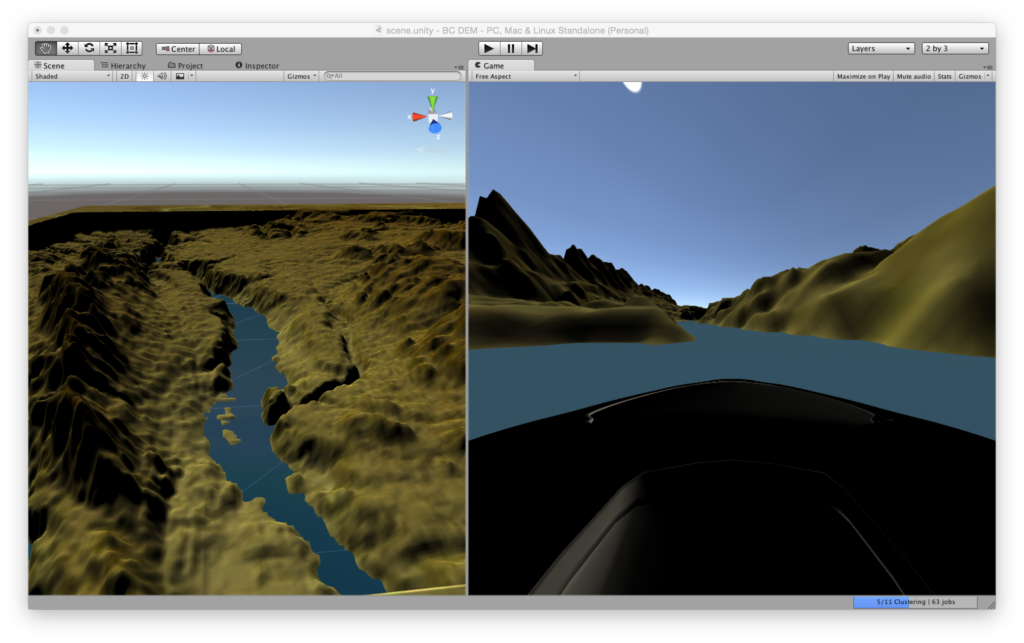

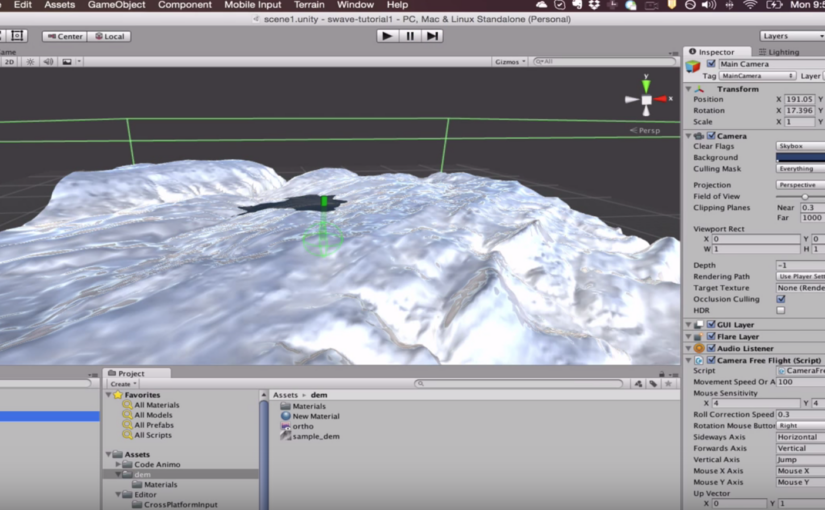

We created a terrain object with some pretty huge dimensions and height. There are mountains in the region we are working with, so it’s pretty cool to see. We apply the heightmap using a commonly used Unity script (I’ll have to put the link here when I remember where I got it!).

Then we add the shaded relief map as a texture to the terrain et voila! We threw in a plane of basic water and raised it to the level where it filled the main river valleys. As our game is going to start out as a river racing game, we want water in there from the very beginning.
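We set the water level by eye, but the same thing can be worked out from numbers. The sketch below assumes the heightmap was stretched from the lowest to the highest elevation in the tile, so a real-world water elevation maps linearly onto the terrain object’s height. The function name and all the values are hypothetical.

```python
def water_plane_y(water_elev, min_elev, max_elev, terrain_height):
    """Convert a real-world water elevation (metres) to a height within the terrain object."""
    if max_elev <= min_elev:
        raise ValueError("max_elev must be greater than min_elev")
    # Fraction of the way up the tile's elevation range
    fraction = (water_elev - min_elev) / (max_elev - min_elev)
    return fraction * terrain_height

# Hypothetical tile: valley floor at 100 m, peaks at 2100 m, river surface at 300 m,
# terrain object given a height of 600 units
y = water_plane_y(300.0, 100.0, 2100.0, 600.0)
```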

We added a car, parented it to the camera and were racing down our waterways within 2 hours of starting. We spent a lot of time adjusting the sizes of the terrain and texture so we could try to match real-world scale. We have some further ideas to try for optimising this, and we also want to nail down a workflow for easily ingesting new geodata for other regions (as we had to manually export and adjust things in the GIS).
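One way to take some of the guesswork out of the scale matching is to estimate the ground size of a tile from its latitude/longitude bounds. This is a rough sketch only: it treats the Earth as a sphere, uses an approximate 111,320 metres per degree, and the bounding box is an invented example rather than one of our actual tiles.

```python
import math

def tile_size_metres(south, north, west, east):
    """Approximate ground width and height (metres) of a lat/lon bounding box."""
    mid_lat = math.radians((south + north) / 2.0)
    metres_per_deg_lat = 111_320.0  # rough spherical-Earth average
    # Degrees of longitude shrink as you move away from the equator
    metres_per_deg_lon = 111_320.0 * math.cos(mid_lat)
    width = (east - west) * metres_per_deg_lon
    height = (north - south) * metres_per_deg_lat
    return width, height

# Hypothetical tile: 1 degree wide, 0.5 degree tall, around 50 degrees north
w, h = tile_size_metres(50.0, 50.5, -122.0, -121.0)
```

The two numbers can then be used as the terrain’s width and length so that one unit is roughly one metre. At these latitudes a tile that looks wide in degrees is quite a bit narrower on the ground, which is easy to get wrong when sizing by eye.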

Reposted from our Indiedb blog, hence writing for a slightly non-geo audience: http://www.indiedb.com/members/1tylermitchell/blogs/edit/1mitchellco-day-1-cross-country-river-run-race-game